Event Categories

WebSocket events are categorized into seven main types:

- Connection Events: WebSocket connection lifecycle events (3 events)
- Session Events: Session lifecycle events (2 events)
- WebRTC Signaling Events: WebRTC signaling exchange (3 events)
- Input Events: User speech detection, transcription, and image input (4 events)
- Response Events: AI response generation and streaming (4 events)
- Function Call Events: External function execution
- Error Events: Error notifications and status alerts (4 events)

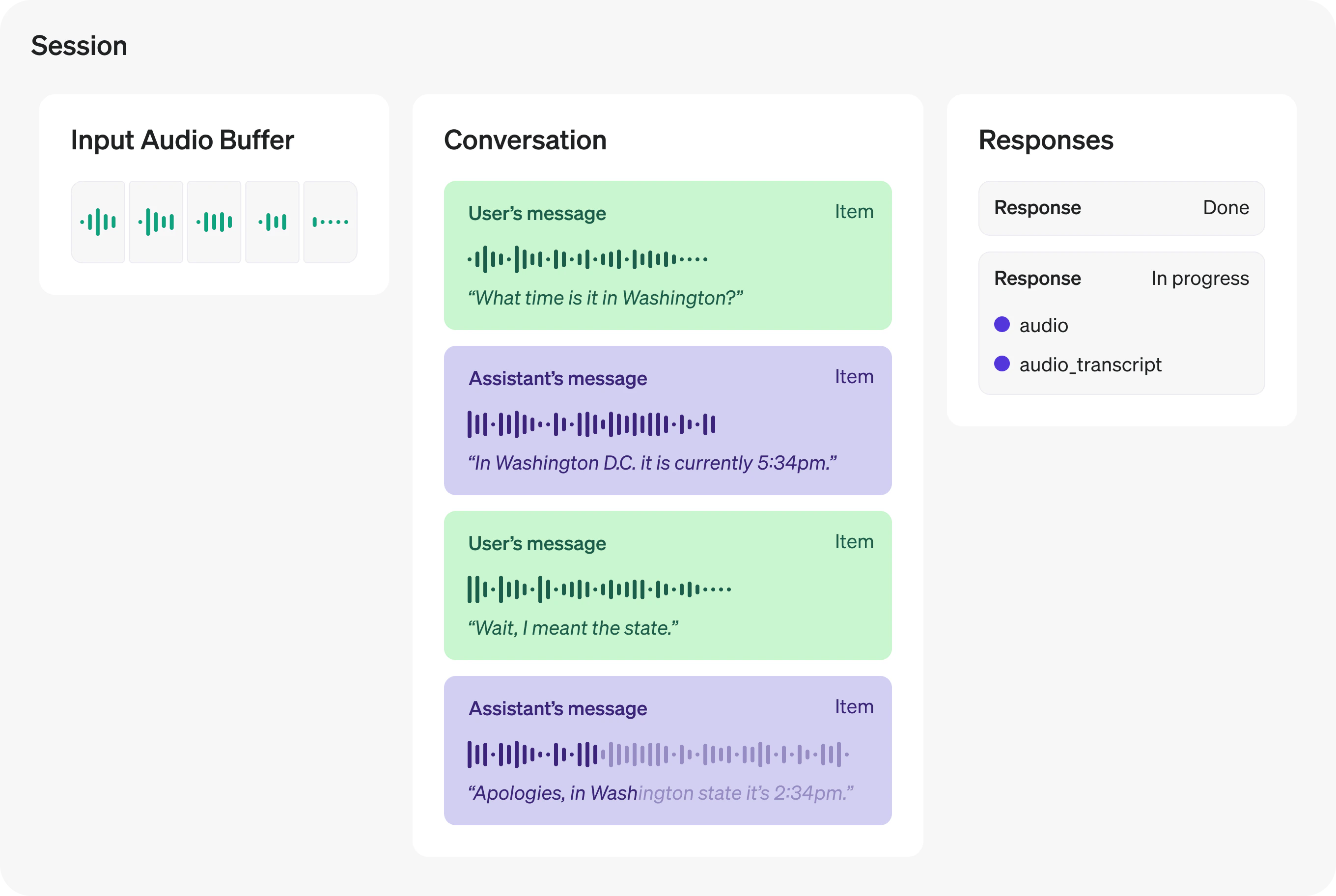

Real-time Session

A real-time session is a stateful interaction between the model and the connected client. The key components of a session are:

- Session Object: Controls the parameters of the interaction, such as the model being used, the voice used to generate output, and other configurations.

- Conversation: Represents user input items and model output items generated during the current session.

- Response: Audio or text items generated by the model that are added to the conversation.

Event Flow Overview

Connection & Session Setup Flow

- Connect WebSocket → Establish a connection to `wss://transfer.navtalk.ai/wss/v2/realtime-chat`
- Send `realtime.input_config` → Send the session configuration (voice, prompt, and optionally tools for OpenAI models) immediately in the `onopen` handler
- Receive `conversation.connected.success` → Connection successful; contains `sessionId` and `iceServers` for WebRTC
- Receive `realtime.session.created` → Send conversation history
- Receive `realtime.session.updated` → Session ready; start sending audio input

If you receive a connection error event (`conversation.connected.fail`, `conversation.connected.close`, `conversation.connected.insufficient_balance`, `conversation.connected.gpu_full`, `conversation.connected.connection_limit_exceeded`, `conversation.connected.backend_error`), handle it appropriately and inform the user.
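The setup sequence above can be sketched as a small state function plus a helper that sends the configuration. This is a minimal sketch rather than official client code: the `{ type, data }` message envelope, the `voice` and `prompt` field names, and the `SocketLike`, `SetupState`, `buildInputConfig`, `sendConfigOnOpen`, and `nextSetupState` names are assumptions made for illustration. Only the event names and the URL come from the flow above.

```typescript
// Assumed message envelope; check the event reference for the real schema.
interface NavTalkMessage {
  type: string;
  data?: Record<string, unknown>;
}

// Minimal stand-in for a WebSocket so the sketch runs without a network.
interface SocketLike {
  send(payload: string): void;
}

const REALTIME_URL = "wss://transfer.navtalk.ai/wss/v2/realtime-chat";

// Build the configuration message (field names are assumptions).
function buildInputConfig(voice: string, prompt: string): NavTalkMessage {
  return { type: "realtime.input_config", data: { voice, prompt } };
}

// Intended to be called from the socket's onopen handler.
function sendConfigOnOpen(socket: SocketLike, voice: string, prompt: string): void {
  socket.send(JSON.stringify(buildInputConfig(voice, prompt)));
}

type SetupState = "connecting" | "connected" | "session_created" | "ready" | "failed";

// Advance the setup state for each incoming event.
function nextSetupState(state: SetupState, msg: NavTalkMessage): SetupState {
  switch (msg.type) {
    case "conversation.connected.success":
      return "connected"; // data carries sessionId and iceServers
    case "realtime.session.created":
      return "session_created"; // the caller sends conversation history here
    case "realtime.session.updated":
      return "ready"; // the caller may start sending audio
    default:
      // Any other conversation.connected.* event is treated as a failure.
      return msg.type.indexOf("conversation.connected.") === 0 ? "failed" : state;
  }
}
```

In a browser, `new WebSocket(REALTIME_URL)` satisfies `SocketLike`, so `sendConfigOnOpen` can be called directly from its `onopen` handler.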

User Input Flow

Audio Input:

- User starts speaking → Receive `realtime.input_audio_buffer.speech_started`
  - Stop AI audio playback
  - Clear audio queue
- User continues speaking → Keep sending audio chunks (no events)
- User stops speaking → Receive `realtime.input_audio_buffer.speech_stopped`
- Transcription complete → Receive `realtime.conversation.item.input_audio_transcription.completed`
  - Display user message in chat (from `data.content`)
  - Save to conversation history

Image Input:

- Send image → Send `realtime.input_image` with a camera snapshot
  - Set `reply: 0` for context-building (no immediate response)
  - Set `reply: 1` for visual Q&A (triggers AI response)
- WebRTC video stream → Video tracks transmitted via WebRTC connection (Method 1)
- Periodic snapshots → Images sent via WebSocket (Method 2)

`realtime.input_audio_buffer.speech_started` will interrupt the AI response naturally.
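The client-side reactions in this flow can be sketched as follows. This is only an illustration under assumptions: the `AudioPlayer` and `ChatEntry` shapes, the `handleInputEvent` and `buildImageMessage` names, and the `image` field (and the placement of `reply` inside `data`) are hypothetical. The event names, `data.content`, and the `reply` values are the ones listed above.

```typescript
// Hypothetical playback state kept by the client.
interface AudioPlayer {
  playing: boolean;
  queue: string[]; // pending AI audio chunks awaiting playback
}

interface ChatEntry {
  role: "user" | "assistant";
  content: string;
}

// React to an input event by updating playback state and chat history.
function handleInputEvent(
  type: string,
  data: { content?: string },
  player: AudioPlayer,
  history: ChatEntry[]
): void {
  if (type === "realtime.input_audio_buffer.speech_started") {
    player.playing = false; // stop AI audio playback
    player.queue.length = 0; // clear the audio queue
  } else if (type === "realtime.conversation.item.input_audio_transcription.completed") {
    // Save the transcribed user message (display is left to the UI layer).
    history.push({ role: "user", content: data.content ?? "" });
  }
  // speech_stopped requires no client action in this sketch.
}

// reply: 0 adds the image as context only; reply: 1 requests an AI response.
// The "image" field name and the placement of "reply" are assumptions.
function buildImageMessage(imageBase64: string, reply: 0 | 1) {
  return { type: "realtime.input_image", data: { image: imageBase64, reply } };
}
```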

AI Response Flow

- AI starts generating → Receive `realtime.response.audio_transcript.delta` (multiple times)
  - Accumulate text chunks by `id` (from `data.id`)
  - Get content from `data.content`
  - Render markdown in real-time
  - Start video playback
- Text complete → Receive `realtime.response.audio_transcript.done`
  - Save complete response to history (from `data.content`)
- Audio complete → Receive `realtime.response.audio.done`
  - Reset playback flags

Use the `id` from `data.id` to track multiple concurrent responses and accumulate content chunks to build the complete message.
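Accumulating chunks per `id` might look like the sketch below. The `ResponseAccumulator` class and `TranscriptData` type are hypothetical names, and the fallback to the accumulated text when the `done` event has an empty `data.content` is a defensive choice of this sketch, not documented behavior.

```typescript
// Fields named in the flow above: data.id and data.content.
interface TranscriptData {
  id: string;
  content: string;
}

class ResponseAccumulator {
  private partial = new Map<string, string>();

  // Call for each realtime.response.audio_transcript.delta event.
  // Returns the text accumulated so far for that id, ready to re-render.
  onDelta(data: TranscriptData): string {
    const text = (this.partial.get(data.id) ?? "") + data.content;
    this.partial.set(data.id, text);
    return text;
  }

  // Call for realtime.response.audio_transcript.done.
  // Prefers the complete text in data.content; falls back to the
  // accumulated chunks if that field is empty (an assumption).
  onDone(data: TranscriptData): string {
    const accumulated = this.partial.get(data.id) ?? "";
    this.partial.delete(data.id);
    return data.content || accumulated;
  }
}
```

Keying the map by `id` keeps interleaved chunks from concurrent responses separate, so each message is rebuilt independently.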

Function Call Flow

- AI determines function needed → Receive `realtime.response.function_call_arguments.done`
  - Parse `arguments` (JSON string) and `call_id` from `data`
- Execute function → Call external API or execute business logic
- Send result → Send `conversation.item.create` with `function_call_output`
- Request AI response → Send `response.create`
- AI processes result → Receive normal response events (`realtime.response.audio_transcript.delta`, etc.)

Send `response.create` after sending the function call output to trigger AI processing.
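The round trip above can be sketched as a function that turns a function-call event into the two messages to send back. This is a sketch under assumptions: the layout of the `conversation.item.create` payload (`type`, `call_id`, `output` inside `data`) is a guess based on the field names above, and `buildFunctionCallReplies` and `LocalFunction` are hypothetical names. A synchronous function is used for brevity; real business logic would often be asynchronous.

```typescript
// Fields named in the flow above.
interface FunctionCallData {
  call_id: string;
  arguments: string; // JSON string
}

type LocalFunction = (args: Record<string, unknown>) => unknown;

// Returns the serialized messages to send, in order:
// the function output first, then the response.create trigger.
function buildFunctionCallReplies(data: FunctionCallData, fn: LocalFunction): string[] {
  let output: unknown;
  try {
    output = fn(JSON.parse(data.arguments));
  } catch (err) {
    // Report parse or execution failures back to the model instead of throwing.
    output = { error: String(err) };
  }
  const result = {
    type: "conversation.item.create",
    // Assumed payload layout; check the Function Call Events reference.
    data: {
      type: "function_call_output",
      call_id: data.call_id,
      output: JSON.stringify(output),
    },
  };
  const trigger = { type: "response.create" };
  return [JSON.stringify(result), JSON.stringify(trigger)];
}
```

Returning both messages from one function makes it harder to forget the `response.create` step.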

Complete Conversation Flow

A typical conversation cycle:

With Function Call:

With WebRTC Signaling:

Event Type Constants

All event types are encapsulated using constants. Define them at the beginning of your code.

Basic Event Handler

Here’s a basic structure for handling WebSocket events:

For detailed information about each event type, see the event categories above or navigate to the specific event documentation pages.
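As one possible shape for the constants and the basic handler described in this section, consider the sketch below. The event strings are taken from the flows on this page; the constant key names other than `CONNECTED_FAIL`, `CONNECTED_CLOSE`, and `INSUFFICIENT_BALANCE` are hypothetical, as are `createDispatcher`, `Handler`, and the assumed `{ type, data }` envelope. The list of constants is partial.

```typescript
// Partial constant table; most key names here are illustrative assumptions.
const NavTalkMessageType = {
  CONNECTED_SUCCESS: "conversation.connected.success",
  CONNECTED_FAIL: "conversation.connected.fail",
  CONNECTED_CLOSE: "conversation.connected.close",
  INSUFFICIENT_BALANCE: "conversation.connected.insufficient_balance",
  SESSION_CREATED: "realtime.session.created",
  SESSION_UPDATED: "realtime.session.updated",
  SPEECH_STARTED: "realtime.input_audio_buffer.speech_started",
  SPEECH_STOPPED: "realtime.input_audio_buffer.speech_stopped",
  TRANSCRIPT_DELTA: "realtime.response.audio_transcript.delta",
  TRANSCRIPT_DONE: "realtime.response.audio_transcript.done",
  AUDIO_DONE: "realtime.response.audio.done",
  FUNCTION_CALL_DONE: "realtime.response.function_call_arguments.done",
} as const;

type Handler = (data: unknown) => void;

// Builds a dispatch function that routes a raw message string to the handler
// registered for its type. Returns true if a handler ran, false otherwise.
function createDispatcher(handlers: Map<string, Handler>) {
  return function dispatch(raw: string): boolean {
    let parsed: unknown;
    try {
      parsed = JSON.parse(raw);
    } catch {
      return false; // not JSON; ignore
    }
    if (typeof parsed !== "object" || parsed === null) return false;
    const msg = parsed as { type?: unknown; data?: unknown };
    if (typeof msg.type !== "string") return false;
    const handler = handlers.get(msg.type);
    if (!handler) return false;
    handler(msg.data);
    return true;
  };
}
```

With a real socket, the dispatcher would typically be wired up as `socket.onmessage = (event) => dispatch(event.data)`.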

Important Notes

Best Practices:

- Use Event Type Constants: Always use `NavTalkMessageType` constants instead of raw strings to avoid typos and make code more maintainable.
- Send Configuration First: Always send `realtime.input_config` immediately after the WebSocket connection opens (in the `onopen` handler), before processing any other events.
- Audio Data Format: When sending audio data, always encapsulate it in the `data.audio` field.
- Error Handling: Always implement handlers for connection error events (`CONNECTED_FAIL`, `CONNECTED_CLOSE`, `INSUFFICIENT_BALANCE`, etc.) to provide proper error feedback to users.
- Event Data Consistency: Some events may have data directly in `nav_data`, while others may have nested structures. Always check the specific event documentation for the exact data format.
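Two of these practices, wrapping audio in `data.audio` and handling connection error events, can be illustrated with the sketch below. It rests on assumptions: the user-facing messages are invented for illustration, `buildAudioMessage` and `describeConnectionError` are hypothetical helpers, and the audio event type is left as a parameter because this page does not name it. The error event names are the ones listed in the setup flow.

```typescript
// Wrap an encoded audio chunk in the data.audio field.
// The event type is passed in; look it up in the Input Events reference.
function buildAudioMessage(eventType: string, audioBase64: string) {
  return { type: eventType, data: { audio: audioBase64 } };
}

// Illustrative user-facing messages for each connection error event.
const CONNECTION_ERROR_MESSAGES = new Map<string, string>([
  ["conversation.connected.fail", "Could not connect. Please try again."],
  ["conversation.connected.close", "The connection was closed."],
  ["conversation.connected.insufficient_balance", "Your account balance is insufficient."],
  ["conversation.connected.gpu_full", "The service is at capacity. Please try again later."],
  ["conversation.connected.connection_limit_exceeded", "Too many open connections."],
  ["conversation.connected.backend_error", "A server error occurred."],
]);

// Returns a message to show the user, or null if the event is not
// one of the connection error events.
function describeConnectionError(eventType: string): string | null {
  return CONNECTION_ERROR_MESSAGES.get(eventType) ?? null;
}
```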